What do you get when you mix two projects, ten people with 18 data SIMs, five different focaccias, a power outage and a barbeque?

Our latest replication sprint, of course, which happened earlier this year in Istria, Croatia, as part of our Matchbox Program. Now that some time has passed, we’re in a better position to reflect on what worked well and what’s next for us.

Interested in having The Engine Room run a replication sprint for your organisation? Get in touch – our digital door is always open.

What are replication sprints?

Replication sprints are designed to remix technical components of successful projects in other contexts, and to embed that technology into an effective strategy for systems change.

Replication sprints are based on the premise that no copy and paste of standards or code can replace tailored technology and data support, but that reusing common components of technology and data tactics that have succeeded in other contexts can dramatically reduce costs.

Earlier this year, we spent a week together with two organisations, Opora from Ukraine and K-Monitor from Hungary, to replicate the CrowData microtasking platform we built for former Matchbox partner ¿Quién Compró?.

The preparations

Back when we started our partnership with ¿Quién Compró?, they needed to develop a system for digitising expense receipts submitted by their Members of Congress, but scanning and manually reviewing each PDF was a time-consuming and error-prone exercise.

After a review of all available crowdsourcing platforms, we decided to reuse and build on the CrowData codebase (originally developed by La Nacion in Argentina) to help liberate their data.

In order to revamp and customise the original ¿Quién Compró? platform for K-Monitor and Opora, we needed a group of domain experts: designers, front-end and back-end developers, UX and data specialists. Our all-star team included Marit Brademann (Scrummaster), Krzysztof Madejski (Back-end), Dimitri Stamatis (Design), Alan Zard (Front-end), and Vanja Bertalan (UX/Data).

They supported us throughout the five days as we replicated the ¿Quién Compró? platform for our new partners, at every stage of the data pipeline, interaction design and development.

Attila Juhász and Levente Szadai were project leads for K-Monitor, and Grygorii Sorochan was project lead for Opora.

The event

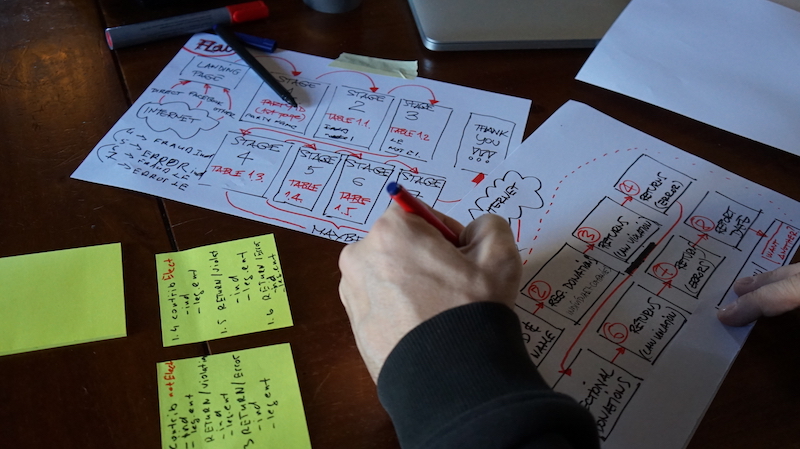

The week of the replication sprint was jam-packed (we blogged about it daily), as everyone worked tirelessly to address the unique challenges and needs of each organisation.

So how did it work in practice?

- We dedicated an entire day to creating a feature set (a functionality wish-list for the new platforms) and identifying the needs and goals for each project.

- We organised tasks around user stories with a Kanban system, a visual process-management system which aids decision-making about what, when and how much to produce.

- We assessed each organisation’s needs, aggregated features, and discussed priorities.

- Marit walked us through the process of creating user stories and we built a Progress Wall, our own system for task management during the whole week.

- Guided by the Wall, Dimitri designed the landing pages, while Krzysztof refactored the back-end: in other words, he updated the code to make it simpler and more robust.

- We created the design assets for K-Monitor, and Alan began implementing the front-end, while K-Monitor and Opora began translating the buttons and text into Hungarian and Ukrainian, respectively.

- On the final day, everyone worked at breakneck speed to finish K-Monitor’s platform and finalise Opora’s data-scraping scripts: code that sifts through Opora’s PDFs and pulls the data into spreadsheets automatically.

- Throughout the week, we also made time for meaningful discussions on advocacy strategy with Seember Nyager and Tamara Puhovski from the Croatian NGO ProPuh. They brought depth and hands-on experience to each of the organisations’ strategies, and helped them to put the outputs from the replication sprint into a realistic timeline.

The end products

K-Monitor now has a beautiful, customised microtasking platform. They managed to launch their project of liberating the asset declarations of Hungarian MPs mere days after the sprint.

Opora left the sprint with powerful scripts for scraping and transforming their PDFs into smooth and simple datasets. We also developed the UX, design, data model and back-end structure for crowdsourcing the more stubborn PDFs. Our technologists and designers worked hard to finalise Opora’s platform post-sprint, and we will be working to bottle up some of the magic for other future partners.

What we learned for the future

- Getting familiar with the technology we’re replicating takes time and patience. Ensuring domain experts have time before the sprint to explore the codebase means more time for stress testing, and learning about the various quirks and bugs. This will also mean the project leads will have more hands-on time with their shiny new project, which in turn allows for more time focusing on strategy and less on understanding the quirks.

- The earlier our domain experts talk with the groups who built version 1.0 of the platform, the better. Connecting the developers of the original platform with our domain experts well before the sprint will reduce stress and lingering questions about functionality and development decisions.

- Connectivity will always be a problem. Warm and inspiring venues do not always boast the fastest or most stable internet. The main take-away here is: always come prepared with copious internet options, and always accept that the internet, much like a puppy that keeps chewing all your shoes, will inevitably be a frustrating part of the experience you can’t live without.

- Ample time for testing results in more intuitive platforms. In an ideal situation, we would have more than one day dedicated to testing. We weren’t able to test the platform as thoroughly as we would have liked, but prioritising testing throughout the week will reduce the rush after the sprint and make for a smoother experience for users.

What’s next?

It is clear by now: we will keep doing replication sprints, as the format works really well. These events have concrete impact, participating organisations learn and gain a lot in a very short amount of time, and the experts find that the format makes the most of their expertise and gives them opportunities to learn quickly as well.

Replication sprints save considerable resources for smaller organisations and improve existing open-source tools, which in turn help others building similar projects. They also allow for clear blending of technology development, data acquisition and processing, and effective strategy. In sum, replication sprints achieve one of our core aims at The Engine Room: they support local and national partners in demonstrating what is possible when their advocacy aims are boosted by data and technology strategy and support.

Want to hear more about these types of projects as they unfold? Join our newsletter, follow us on Twitter, or send us an email to find out about our next replication sprint!