Following my last post about the ‘Innovative Uses of Technology in HIV Clinical Trials’ event, I wanted to share three tactics for managing responsible data complexity in program design. With a room full of PhDs, MDs, MPHs, MBAs and MScs (and sometimes a combination of several of each), what could the responsible data perspective offer?

The landscape of technology is shifting so rapidly that it can be challenging just to keep up with it, let alone stay ahead. Research institutions and researchers are doing their best to operate within ethical and responsible bounds, but the mechanisms they used to rely on – like institutional review boards (IRBs) – are struggling to keep up.

I suggested participants consider three tactics for developing more adaptive thinking about responsible data within research and project design:

- responsible data red teaming

- future-proofing

- planning for the human element

Responsible data red teaming

This term is borrowed from the technology sector. Loosely defined, it means actively thinking about your project design from the perspective of an adversary, and ‘war-gaming’ to test how you would respond to different scenarios. Here, that means thinking about how things might go wrong during the collection, storage, processing, and preservation of data.

Here are some potential actions and issues to consider in responsible data red teaming.

Ask yourself:

- Have I identified and engaged people on my team who would make good red-team members? (Think of the vocal, pessimistic devil’s advocate.)

- Have I sufficiently captured threats that might affect my project, and how likely they are?

- Have I done a risk analysis for my proposed approach, built upon a do-no-harm principle?

- Have I spent enough time planning how different scenarios for the project might play out?

- Before introducing new technology components, have I considered:

  - Data minimisation?

  - Roll-out and maintenance from a user perspective?

  - How new technology affects existing processes like consent?

- Have I made a clear risk plan for high-impact, low-probability events?
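One way to make the threat and likelihood questions above concrete is a simple risk register. The sketch below is only an illustration: the 1 to 5 scales, the thresholds, the example threats, and the names `Threat` and `needs_contingency_plan` are all assumptions made for this example, not a standard or a method presented at the event. It ranks threats by likelihood times impact, and separately flags the high-impact, low-probability ones that a plain ranking tends to bury.

```python
from dataclasses import dataclass


@dataclass
class Threat:
    description: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe harm)

    @property
    def score(self) -> int:
        # Simple likelihood-times-impact score, used only for ranking.
        return self.likelihood * self.impact


def needs_contingency_plan(threat: Threat,
                           impact_floor: int = 4,
                           likelihood_ceiling: int = 2) -> bool:
    """Flag high-impact, low-probability threats (thresholds are assumptions)."""
    return (threat.impact >= impact_floor
            and threat.likelihood <= likelihood_ceiling)


# Hypothetical entries, for illustration only.
register = [
    Threat("Field tablet lost or stolen with unencrypted records", 3, 4),
    Threat("Identifier becomes linkable after a legal or policy change", 1, 5),
    Threat("Consent form out of date after a new tool is introduced", 4, 2),
]

# Highest combined score first.
ranked = sorted(register, key=lambda t: t.score, reverse=True)

# Threats that score low overall but would be severe if they happened.
flagged = [t for t in register if needs_contingency_plan(t)]

for t in ranked:
    marker = " (needs contingency plan)" if needs_contingency_plan(t) else ""
    print(f"{t.score:>2}  {t.description}{marker}")
```

The numbers matter less than the conversation they force: the flagged threat ranks last by score, which is exactly why it needs its own explicit plan.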

Future-proofing

This is another term borrowed from technologists, who attempt to design systems and tools that are resilient to future changes in technology. From a responsible data perspective, future-proofing involves planning technology and data processes that mitigate risks that could emerge following changes in the data and technology landscape.

Why is future-proofing necessary? Many IRBs consider a fingerprint ID (used to authenticate the identity of clinical trial subjects) to be ‘anonymous’ if there is no national fingerprint registry in the country where the trial is being conducted. But what if that country later adopts a fingerprint registry? What could happen to the sex workers in your study? How might the data you collect be used against them if cultural, political, or technical landscapes change? And with this in mind, is it still appropriate to use a fingerprint as an identifier, or to collect it at all?

Ask yourself:

- How is the operating environment in which I work likely to change in the next five years, legally, technically and culturally?

- How could the data I am collecting be re-used if and when the operating environment changes?

- What will happen to the data after the project is completed?

- What technical expertise do I need to plan effectively for these issues?

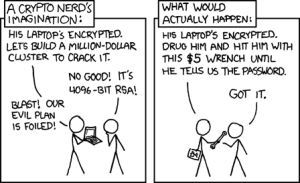

Planning for the human element of systems

Even the most technically well-designed systems can be undermined by the humans they are meant to serve. When planning data and technology infrastructure and processes, the human element is a key consideration.

Ask yourself:

- How can you minimise human error in even the best-designed information security systems and best-laid responsible data plans?

- Do your security and responsible data strategies require:

  - Training?

  - Awareness-raising?

  - Behaviour change?

- How can you provide adequate information to patients so they can give informed consent?

What next?

Overall, responsible data is a process, not an outcome. The tactics above are ways of thinking that can be incorporated into all types of projects. If you are looking for feedback on your project design and want support, join the discussion list and pitch your problem to the responsible data community of practice.